Is One Lineup Better Than Another?

One part of Wayne Winston’s new book Mathletics that I didn’t really like was the way he compared raw lineup data to determine if one lineup is better than another. After thinking about it more, I think the real reason I don’t like his method is that it compares the lineups’ net points per minute (pts/min).

I’m a big proponent of points per possession (pts/poss), so I wanted to look at how we could compare lineups using raw pts/poss data. I think it is important to put emphasis on raw, as we’re not trying to control for strength of opponent, home court advantage, etc. Although these things have a real impact on the way our data is generated, the goal is to maintain simplicity while still being useful, just like the method Wayne proposes in his book.

Calculating the Difference Between Two Lineups’ Net Pts/Poss

As long as you have the data, comparing the difference between two lineups’ mean net pts/poss isn’t hard (I’m using hard here relatively, of course; for my mom, this would be really hard). That said, it helps to have something that makes the calculations easy. Therefore, I have created an R function compare_lineups() that you can find in compare_lineups.R.

This function takes four arguments:

- l1.o: vector of points scored on each offensive possession by lineup #1

- l1.d: vector of points allowed on each defensive possession by lineup #1

- l2.o: vector of points scored on each offensive possession by lineup #2

- l2.d: vector of points allowed on each defensive possession by lineup #2

Using this data, the compare_lineups() function calculates and reports the following:

- The mean and standard error for the difference in the lineups’ mean net pts/poss

- A 95% confidence interval for this difference

- The z-score of the difference and the estimated probability that lineup #1’s mean net pts/poss is greater than lineup #2’s mean net pts/poss

This function also returns these statistics in the form of a list. This allows you to do cool stuff like graph the plausible values for the difference between each lineup’s mean net pts/poss.
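For readers who want to see the arithmetic, here is a minimal sketch of how a function like this could be written. It is not the contents of compare_lineups.R: the name compare_lineups_sketch, the conf argument, and the list elements ci, z and p are assumptions for illustration (only diff and se appear in the post). It also assumes the four samples are independent, uses a normal approximation, and scales results to pts/100 poss.

```r
# Hypothetical reconstruction for illustration; NOT the code in compare_lineups.R.
compare_lineups_sketch <- function(l1.o, l1.d, l2.o, l2.d, conf = 0.95) {
  # Net pts/poss for a lineup = mean points scored - mean points allowed
  net1 <- mean(l1.o) - mean(l1.d)
  net2 <- mean(l2.o) - mean(l2.d)

  # Difference in mean net pts/poss, scaled to pts/100 poss (assumed scaling)
  diff <- 100 * (net1 - net2)

  # Assumption: the four samples are independent, so the variances
  # of the four sample means simply add
  se <- 100 * sqrt(var(l1.o) / length(l1.o) + var(l1.d) / length(l1.d) +
                   var(l2.o) / length(l2.o) + var(l2.d) / length(l2.d))

  # Normal-approximation confidence interval, z-score, and Pr(L1 > L2)
  crit <- qnorm(1 - (1 - conf) / 2)
  z <- diff / se
  list(diff = diff, se = se,
       ci = c(diff - crit * se, diff + crit * se),
       z = z, p = pnorm(z))
}

# Made-up toy data: points on each possession for two lineups
toy <- compare_lineups_sketch(c(0, 2, 2, 3, 0, 2), c(0, 0, 2, 1, 0),
                              c(0, 2, 0, 1, 2, 0), c(2, 2, 0, 3, 0))
str(toy)
```

Returning a list keeps the pieces available for later plotting or further calculation.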

Application to the Example From Mathletics

To illustrate his method, Wayne gives an example of how he compares lineups on page 225 of Mathletics. In his example, he compares two Cavs lineups from the 2006-2007 season. The end result of his method is that we estimate there to be a >99% chance that the superior lineup has a higher mean net pts/48 minutes than the inferior lineup.

With compare_lineups(), we can now compare the lineups’ mean net pts/poss. To do this, you’ll first need to load the code in compare_lineups.R. Once loaded, you can run the following command to compare the lineups:

res <- compare_lineups(c(rep(0,39+45),rep(1,2+5),rep(2,29+37),rep(3,2+5)), c(rep(0,52+42),rep(1,3+2),rep(2,31+26),rep(3,6+1)), c(rep(0,87+30),rep(1,15+4),rep(2,66+23),rep(3,9+2)), c(rep(0,29+80),rep(1,3+4),rep(2,18+70),rep(3,9+18)))

Running this code will produce the following output:

For Lineup 1 – Lineup 2:

–> Mean: 28.5

–> Std Err: 15.3

–> 95% CI: (-1.5, 58.5)

–> Z-score: 1.86

–> Pr(L1 > L2): 0.9686

This output shows us that the estimated difference between the lineups’ mean net pts/poss is 28.5 pts/100 poss with a standard error of 15.3 pts/100 poss. A 95% confidence interval for the mean difference is (-1.5, 58.5) pts/100 poss, which means we have 95% confidence that the true mean difference is somewhere in this interval.
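To see where these numbers come from, the sketch below breaks the four vectors down by hand. It assumes the samples are independent and that the standard error is the square root of the summed variances of the four sample means. That is my reading of the output, not something taken from compare_lineups.R, and the variable names are made up for illustration.

```r
# The four vectors passed to compare_lineups() in the post
samples <- list(
  l1.o = c(rep(0, 39 + 45), rep(1, 2 + 5), rep(2, 29 + 37), rep(3, 2 + 5)),
  l1.d = c(rep(0, 52 + 42), rep(1, 3 + 2), rep(2, 31 + 26), rep(3, 6 + 1)),
  l2.o = c(rep(0, 87 + 30), rep(1, 15 + 4), rep(2, 66 + 23), rep(3, 9 + 2)),
  l2.d = c(rep(0, 29 + 80), rep(1, 3 + 4), rep(2, 18 + 70), rep(3, 9 + 18))
)

# Possessions, points per 100 possessions, and variance of each sample mean
breakdown <- data.frame(
  poss        = sapply(samples, length),
  pts_per_100 = 100 * sapply(samples, mean),
  var_of_mean = sapply(samples, function(x) var(x) / length(x))
)
print(round(breakdown, 4))

# Net pts/100 poss for each lineup, then the difference between them
net1 <- breakdown["l1.o", "pts_per_100"] - breakdown["l1.d", "pts_per_100"]
net2 <- breakdown["l2.o", "pts_per_100"] - breakdown["l2.d", "pts_per_100"]
diff_est <- net1 - net2

# Assumed standard error: independent samples, so variances of the means add
se_est <- 100 * sqrt(sum(breakdown$var_of_mean))

# 95% interval under a normal approximation
ci <- diff_est + c(-1, 1) * qnorm(0.975) * se_est
print(round(c(diff = diff_est, se = se_est, lower = ci[1], upper = ci[2]), 1))
```

The breakdown also shows how few possessions sit behind each estimate, which is why the interval is so wide.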

We’re specifically interested in the probability that the mean net pts/poss of lineup #1 is greater than the mean net pts/poss for lineup #2 (aka a one-tailed test for that inner stat nerd deep inside of you), so the z-score of 1.86 allows us to estimate that this probability is 0.97. In other words, we conclude that lineup #1’s mean net pts/poss is significantly greater than lineup #2’s at the 5% level in a one-tailed test, even though the two-sided 95% confidence interval above still includes 0.
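The step from the z-score to a probability can be sketched as follows, assuming a normal approximation and starting from the rounded summary numbers printed in the output. Because the inputs are rounded, the last digit or two may differ slightly from what compare_lineups() prints. The variable names are made up for illustration.

```r
# Rounded summary values taken from the printed output (pts/100 poss)
diff_est <- 28.5
se_est   <- 15.3

# z-score of the observed difference against a null difference of 0
z <- diff_est / se_est

# Normal-approximation estimate of Pr(L1 > L2), as used in the post
pr_l1_better <- pnorm(z)

# The matching classical p-values under the null of no difference
p_one_sided <- pnorm(z, lower.tail = FALSE)
p_two_sided <- 2 * p_one_sided

print(round(c(z = z, pr_l1_better = pr_l1_better,
              p_one_sided = p_one_sided, p_two_sided = p_two_sided), 3))
```

Printing the one-sided and two-sided p-values side by side shows why the one-tailed test clears the 5% threshold while the two-sided 95% interval still contains 0.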

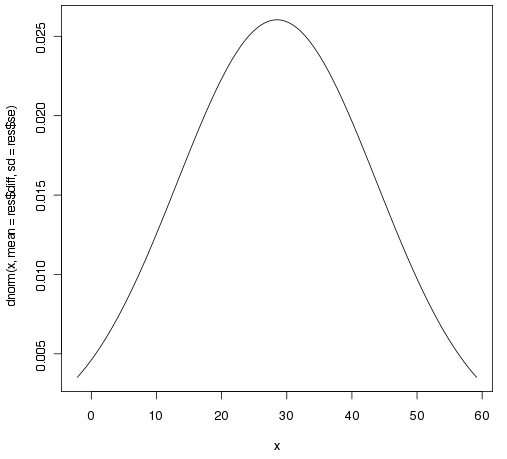

We can also see this visually with a graph. In R,

curve(dnorm(x,mean=res$diff,sd=res$se),from=res$diff-2*res$se,to=res$diff+2*res$se)

will generate the following graph of the difference in the lineups’ mean net pts/100 poss:

In this case we arrive at the same conclusion as Wayne: lineup #1 is better than lineup #2. Depending on the question you’re trying to answer, like determining which lineup you should play in a given situation, I think this really just provides us with a good starting point. It is important to try to understand why this is showing up in the data, as we want to ensure that, for example, the lineup with the better numbers isn’t showing this advantage simply because it played inferior opponents.

Summary

My hope is that this code will make it easier to compare lineups on a per-possession basis, although you still have to go through and extract the data to use it. This is still just a starting point for comparing lineups, but it does give us some evidence to dig into as we try to understand what makes one lineup better than another in a given situation.

13 Comments on this post

Jose A. Martinez said:

Nice post, Ryan. Just a note: It seems that the standard error is relatively very large. This complicates statistical comparisons. Measures of effect size would help to interpret the findings. You have considered the z-score, but others may help too (such as Cohen’s “d”). Anyway, your results are somewhat uninformative to interpret, because we know with high probability the direction of the effect (lineup #1 is better than lineup #2), but we know the size of the effect only with high imprecision (so we are not sure about the strength of the effect, due to the high variability). I think this latter issue is important because we should move from statistical significance to practical significance in these types of comparisons.

Congratulations on your work.

Jose.

October 6th, 2009 at 12:24 pm

Ryan said:

Jose, your point about the high variability is what made me wonder how we have statistical significance with so “few” possessions. The large difference in mean net pts/poss means that even with this large amount of variability we can draw this conclusion.

The 95% confidence interval goes as high as 58.5, and ranges over 60 points, further showing the uncertainty. I’m interested to run these calculations on other lineups to see what sort of ranges exist, and I think a Bayesian approach may help us shrink this confidence interval to more reasonable amounts.

October 6th, 2009 at 12:53 pm

Jose A. Martinez said:

Ok Ryan, please inform us about the Bayesian approach. Currently, I am starting to learn about it, so I will be very interested in your empirical application.

October 6th, 2009 at 1:07 pm

doozer said:

just a sidenote: a test of significance like the one you did to compare L1 with L2 does not give you the probability that L1 > L2. It just gives you the probability of seeing the data that you HAVE, given the null hypothesis that the two lineups are NOT different. so in your case i assume this probability would be 0.03. Nevertheless this does not give you a probability about L1 and L2 being different! It just states that given your data it is highly unlikely that the two lineups are the same.

If I got you all wrong, please let me know.

Cheers,

Jens

October 6th, 2009 at 4:51 pm

Ryan said:

Jens, you’re right that we don’t typically make probability statements when working with this sort of problem classically. I sort of bastardized things and simply assumed that these were true normal distributions with the specified means and std deviations to “approximate” that probability.

So you are correct when you say that the probability that we see this result given the null being true (the difference being equal to 0) is 0.03. What I did isn’t great form, and it isn’t exactly accurate, but it is simply what I’m using as my best guess. Sort of how Wayne uses it in his book.

October 6th, 2009 at 5:29 pm

David said:

Ryan, I think Winston is on target in using ppm rather than ppp as his base structure of lineup comparisons. While ppp allows apples-to-apples comparisons between individuals in lineups that play different styles, one of the important comparisons from one lineup to another is the advantages allowable by the style of play that group is able to play.

For example, Winston’s work with the Mavs illuminated the fact that the relatively undersized Nowitzki-Bass-Terry-Barea-Kidd lineup was comparatively incredibly successful for them. Obviously if you merely compare one of their possessions against one performed by a bigger lineup, you might not see as much of an advantage. But a secondary benefit gained by having smaller, faster players who push the action is that a small advantage on each possession can be increased to much greater effect when the lineup can multiply possessions by playing at a much faster pace. Meanwhile some bigger lineups have no such option.

The net conclusion is that it’s better to use the ppm standard when comparing full lineups against each other, because it allows the ability to run to be factored into the equation properly.

October 6th, 2009 at 11:34 pm

Ryan said:

David, that is an interesting point you bring up. I still think you want to look at pts/poss instead of pts/min, but I can certainly see where in the case of two lineups that have equal pts/poss you would prefer the lineup that can play at a faster pace and get you more possessions.

I’ll definitely give this some more thought, but my initial reaction is to study them both separately. Still, that is a very good point that pace is definitely part of the overall equation.

October 6th, 2009 at 11:52 pm
